Liquid AI's LFM2.5: Eight Engineering Levers for SOTA LLMs

llm development, liquid ai, lfm2.5 model, ai engineering, model optimization, on-device ai

February 3, 2026

Summary

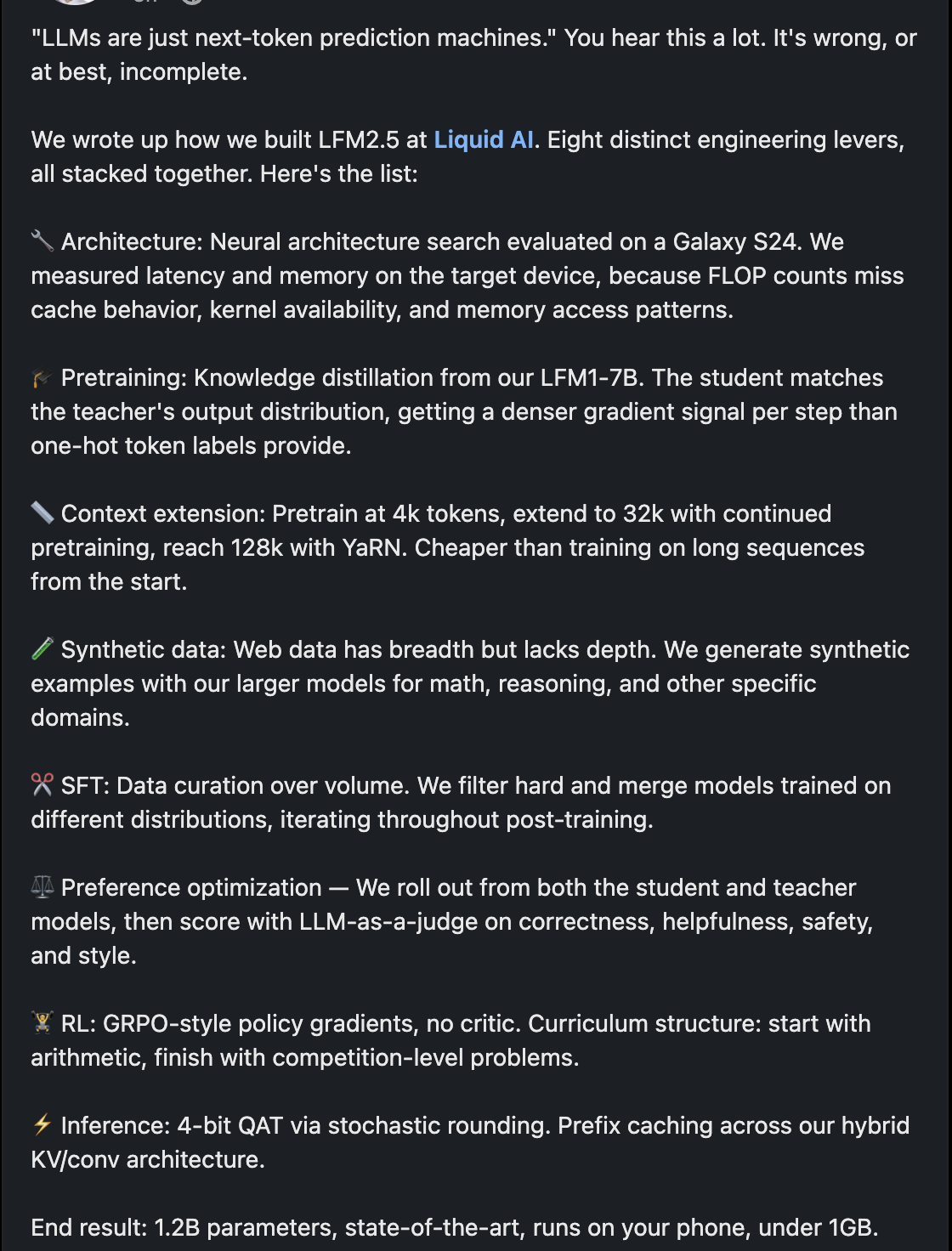

Liquid AI developed LFM2.5, a state-of-the-art large language model (LLM) with 1.2 billion parameters, designed to run efficiently on mobile devices with a footprint under 1 GB. The source article disputes the oversimplified view of LLMs as mere "next-token prediction machines" and details eight distinct engineering levers used to build LFM2.5.

The development process involved:

- Architecture: Neural architecture search, evaluated on a Galaxy S24, prioritizing on-device latency and memory over FLOP counts.

- Pretraining: Knowledge distillation from their LFM1-7B model, providing denser gradient signals.

- Context Extension: Initial pretraining at 4k tokens, extended to 32k, and then to 128k using YaRN (Yet another RoPE extensioN method) for cost efficiency.

- Synthetic Data: Generation of synthetic examples using larger models to add depth in domains like math and reasoning, complementing web data.

- SFT (Supervised Fine-Tuning): Emphasized data curation and filtering over volume, merging models trained on diverse distributions.

- Preference Optimization: Generated rollouts from both the student and teacher models, then scored the outputs with an LLM-as-a-judge for correctness, helpfulness, safety, and style.

- RL (Reinforcement Learning): Utilized GRPO-style policy gradients without a critic, employing a curriculum structure from arithmetic to competition-level problems.

- Inference: Implemented 4-bit QAT (Quantization-Aware Training) via stochastic rounding and prefix caching across a hybrid KV/conv architecture.
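To make the stochastic rounding mentioned in the Inference item concrete, here is a minimal sketch of the forward "fake quantization" step that quantization-aware training typically simulates. The function names, the per-tensor scale of max |w| / 7, and the sample weights are illustrative assumptions, not details from Liquid AI's pipeline. The idea is that each value is rounded up with probability equal to its fractional part, so the rounding error is zero on average.

```python
import math
import random


def stochastic_round(x, rng):
    """Round x down or up to an integer; round up with probability equal to the fractional part."""
    lower = math.floor(x)
    return lower + (1 if rng.random() < x - lower else 0)


def fake_quantize_int4(weights, rng):
    """Map weights onto a signed 4-bit grid ([-8, 7]) and back to floats.

    The per-tensor scale (max |w| / 7) is an assumption made for this sketch.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    dequantized = []
    for w in weights:
        q = stochastic_round(w / scale, rng)
        q = max(-8, min(7, q))  # clamp to the signed 4-bit range
        dequantized.append(q * scale)
    return dequantized, scale


rng = random.Random(0)  # seeded only so the example is repeatable
weights = [0.42, -0.17, 0.03, -0.58, 0.25]  # hypothetical weights
quantized, scale = fake_quantize_int4(weights, rng)
print(quantized, scale)
```

In a real training loop the rounding step would sit inside the forward pass, with gradients passed through it by some estimator; that part is omitted here because the source does not describe it.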

This comprehensive approach resulted in a highly optimized LLM capable of running on a phone, challenging conventional LLM development paradigms.
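As an illustration of the critic-free RL lever listed above, the following sketch shows the group-relative advantage computation that GRPO-style methods generally rely on: several responses are sampled for one prompt, and each response's reward is normalized against the group's mean and standard deviation instead of a learned value function. The function name, the use of the population standard deviation, and the example rewards are assumptions for illustration; the source does not specify how LFM2.5 computes them.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against its own sampling group.

    The group mean acts as the baseline, so no critic network is needed.
    eps avoids division by zero when every response gets the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four sampled answers to one math prompt
# (1.0 = correct, 0.0 = incorrect).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Correct answers receive positive advantages and incorrect ones negative, while a group in which every answer scores the same contributes no gradient signal. That is one reason a curriculum of graded difficulty can help: problems that are always solved or never solved teach the policy little.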
