MUVERA: Efficient Multi-Vector Embeddings for RAG Systems

Summary

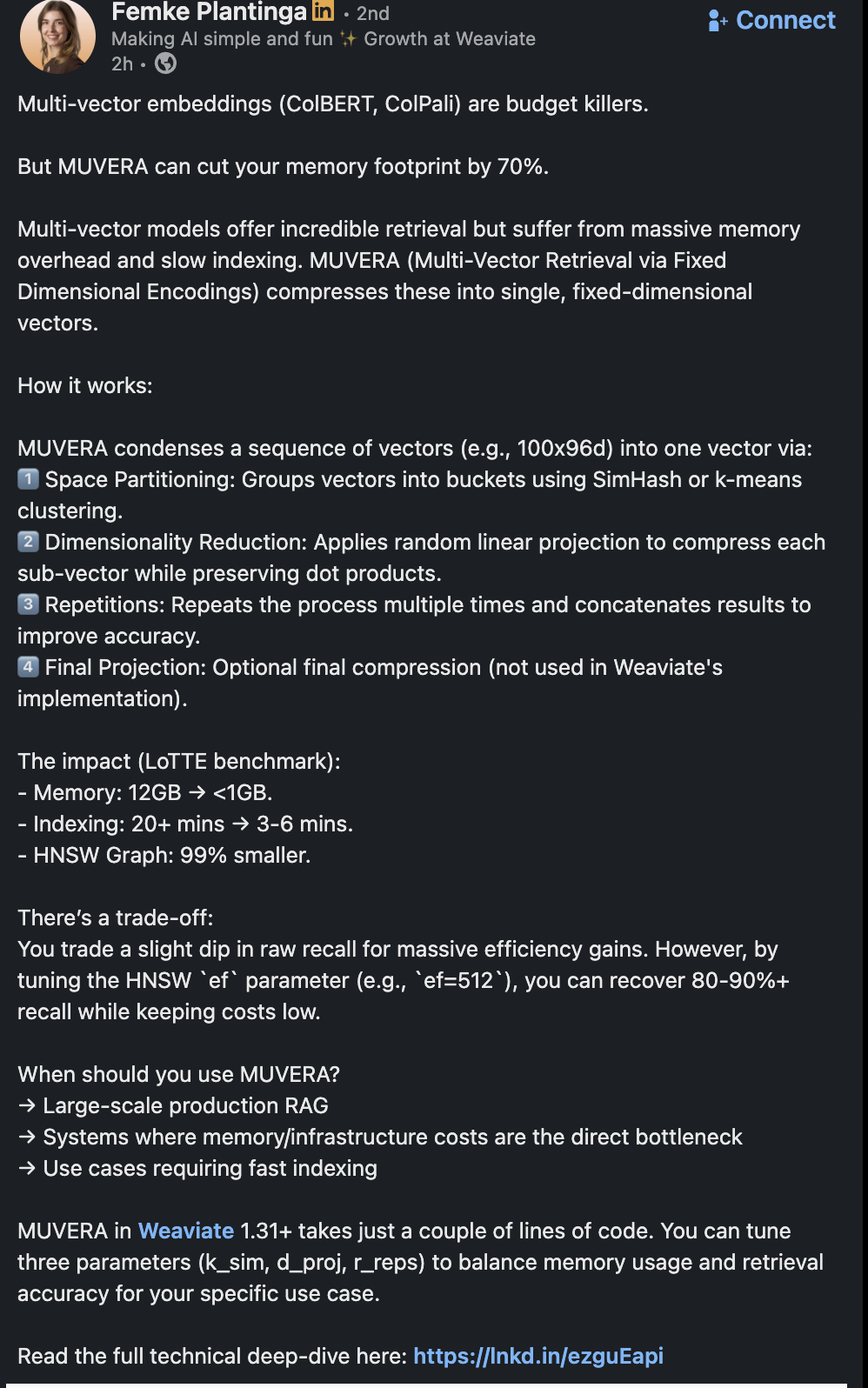

The document, a LinkedIn post by Femke Plantinga, introduces MUVERA (Multi-Vector Retrieval via Fixed Dimensional Encodings), a technology designed to address the significant memory overhead and slow indexing issues associated with multi-vector embeddings like ColBERT and ColPali. MUVERA compresses these large embeddings into single, fixed-dimensional vectors, offering massive efficiency gains for large-scale production RAG systems.

Multi-vector models retrieve well but are "budget killers": each document is stored as many vectors, which inflates memory and slows indexing. MUVERA aims to cut the memory footprint by 70%. On the LoTTE benchmark, the reported impact is a memory reduction from 12GB to less than 1GB, indexing time cut from over 20 minutes to 3-6 minutes, and a 99% smaller HNSW graph. Raw recall dips slightly, but tuning the HNSW ef parameter (e.g., ef=512) recovers 80-90%+ recall while keeping costs low. MUVERA is recommended for large-scale production RAG, for systems bottlenecked by memory or infrastructure costs, and for use cases that need fast indexing. It is available in Weaviate 1.31+ and exposes three tunable parameters, k_sim, d_proj, and r_reps, to balance memory against accuracy.
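To make the memory/accuracy trade-off concrete, here is a minimal sketch of how the three parameters could determine the size of one encoded vector. It assumes the SimHash variant of the scheme, where k_sim hyperplanes yield 2^k_sim buckets, each bucket holds d_proj values, and the whole layout is repeated r_reps times. The function names, the example values, and the 4-bytes-per-value assumption are illustrative and not taken from the post; Weaviate's actual storage layout may differ.

```python
def fde_dimensions(k_sim: int, d_proj: int, r_reps: int) -> int:
    """Length of one fixed-dimensional encoding under the assumptions above."""
    buckets_per_repetition = 2 ** k_sim
    return r_reps * buckets_per_repetition * d_proj


def fde_bytes(k_sim: int, d_proj: int, r_reps: int, bytes_per_value: int = 4) -> int:
    """Approximate raw size of one encoding, assuming float32 values."""
    return fde_dimensions(k_sim, d_proj, r_reps) * bytes_per_value


# Illustrative values only: 10 repetitions * 16 buckets * 16 dims per bucket.
print(fde_dimensions(k_sim=4, d_proj=16, r_reps=10))  # 2560
print(fde_bytes(k_sim=4, d_proj=16, r_reps=10))       # 10240
```

Under these assumptions, raising k_sim doubles the encoding size with each step, while d_proj and r_reps scale it linearly, which is why the three are tuned together.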

How MUVERA works:

- Space Partitioning: Groups vectors into buckets using SimHash or k-means clustering.

- Dimensionality Reduction: Applies a random linear projection to compress each sub-vector while approximately preserving dot products.

- Repetitions: Repeats the process multiple times and concatenates results to improve accuracy.

- Final Projection: An optional final compression step, not used in Weaviate's current implementation.
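The first three steps can be sketched in plain Python. This is a simplified illustration, not Weaviate's implementation: it assumes SimHash partitioning, a random ±1 projection, summing per bucket for queries and averaging per bucket for documents, and it skips the optional final projection. Details of the full method, such as how empty document buckets are filled, are left out, and all function names are hypothetical.

```python
import math
import random


def make_encoder(dim, k_sim, d_proj, r_reps, seed=0):
    """Draw the random hyperplanes and projections shared by queries and documents."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(d_proj)
    reps = []
    for _ in range(r_reps):
        # k_sim Gaussian hyperplanes define 2**k_sim SimHash buckets.
        planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(k_sim)]
        # Random +-1 matrix (d_proj x dim) used for dimensionality reduction.
        proj = [[rng.choice((-scale, scale)) for _ in range(dim)] for _ in range(d_proj)]
        reps.append((planes, proj))
    return reps


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def encode(vectors, reps, is_query):
    """Collapse a list of token vectors into one fixed-length vector."""
    out = []
    for planes, proj in reps:
        n_buckets = 2 ** len(planes)
        d_proj = len(proj)
        sums = [[0.0] * d_proj for _ in range(n_buckets)]
        counts = [0] * n_buckets
        for v in vectors:
            # Space partitioning: one sign bit per hyperplane picks the bucket.
            bucket = 0
            for plane in planes:
                bucket = (bucket << 1) | int(dot(plane, v) > 0)
            # Dimensionality reduction: project, then accumulate in the bucket.
            for i, row in enumerate(proj):
                sums[bucket][i] += dot(row, v)
            counts[bucket] += 1
        for b in range(n_buckets):
            if not is_query and counts[b] > 0:
                # Documents use the bucket average; queries keep the sum.
                sums[b] = [x / counts[b] for x in sums[b]]
            out.extend(sums[b])  # Repetitions are concatenated.
    return out


# Usage: a toy "document" of 6 token vectors with 8 dimensions each.
reps = make_encoder(dim=8, k_sim=3, d_proj=4, r_reps=5, seed=1)
doc = [[float(i + j) for j in range(8)] for i in range(6)]
doc_fde = encode(doc, reps, is_query=False)
print(len(doc_fde))  # 160, i.e. 5 repetitions * 8 buckets * 4 dims
```

Because the query and document encodings share the same random hyperplanes and projections, a single dot product between them can stand in for the multi-vector similarity, which is what lets a standard single-vector HNSW index be used.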

Key Entities:

- Person: Femke Plantinga

- Companies/Brands: Weaviate, LinkedIn

- Technologies/Concepts: Multi-vector embeddings, ColBERT, ColPali, MUVERA (Multi-Vector Retrieval via Fixed Dimensional Encodings), SimHash, k-means clustering, Random linear projection, LoTTE benchmark, HNSW Graph, RAG (Retrieval Augmented Generation).

Tools and Technologies:

- Software/Platforms: Weaviate (version 1.31+), LinkedIn

- Algorithms/Techniques: SimHash, k-means clustering, Random linear projection, HNSW (Hierarchical Navigable Small World) Graph.

Facts and Data:

- Memory footprint reduction: 70%

- Memory usage on LoTTE benchmark: 12GB reduced to <1GB

- Indexing time on LoTTE benchmark: 20+ minutes reduced to 3-6 minutes

- HNSW Graph size reduction: 99% smaller

- Recall recovery: 80-90%+ by tuning the HNSW ef parameter (e.g., ef=512)

- Tunable parameters in Weaviate: k_sim, d_proj, r_reps
